Explainable AI in Finance: What It Is, Why It Matters, and How FP&A Teams Can Use It

By Robert Boyter |

Last Updated: April 13, 2026

Finance runs on numbers that need to be defended, to boards, auditors, regulators, and investors. Now that AI is generating many of those numbers, a new question has emerged: can your team actually explain what the AI did and why?

The 2025 IIF-EY Annual Survey on AI/ML Use in Financial Services found that 18% of financial institutions cited explainability and black-box concerns as the top issue raised by their supervisors during regulatory engagement, making it the single most common regulatory flashpoint, ahead of transparency (16%) and bias (13%). If your team is using AI-powered planning tools, explainable AI in finance isn't a compliance checkbox. It's a business-critical capability.

Most conversations about AI explainability focus on banks, credit scoring, and lending algorithms. But the challenge is just as real, and just as urgent, for mid-market financial planning and analysis (FP&A) teams.

When your AI-powered planning tool flags an unusual variance, revises a revenue forecast, or surfaces a cash flow anomaly, someone in the room will ask: "Why did it say that?" If your answer is "the model flagged it," that's not enough for a CFO, an audit committee, or a regulator. It's not even enough for your own team to act with confidence.

This article gives you a practical foundation. You'll walk away with:

Whether you're already using AI in your planning process or actively evaluating tools, this guide will help you ask the right questions, and give better answers when leadership asks them of you.

Explainable AI (XAI) refers to artificial intelligence systems designed so that their outputs—predictions, decisions, classifications—can be understood, interpreted, and audited by humans. It's the difference between an AI that tells you what it concluded and one that also tells you why.

The contrast becomes concrete fast.

Say your AI planning tool generates a Q3 revenue forecast of $4.2 million. A black-box model delivers that number with no supporting logic. When your CFO asks what drove it, the system has no answer. Was it seasonality? Pipeline velocity? Macro conditions? Nobody knows.

An explainable AI system generates the same forecast but surfaces the reasoning: "Revenue weighted 60% on historical seasonality, 25% on current pipeline velocity, 15% on trailing headcount trends." Now your CFO can challenge assumptions, your FP&A team can adjust inputs, and you can walk a board through the logic without flinching.

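Explainable AI methods generally fall into two categories:

Ante-hoc (interpretable) models: transparent algorithms such as decision trees or linear regression, where every step of the logic can be followed from input to output.

Post-hoc methods: techniques such as SHAP and LIME that are applied after a complex model has produced an output, to approximate why it reached that result.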

Most AI systems in FP&A tools today rely on post-hoc methods because more complex models tend to be more accurate, and post-hoc tools make them interpretable. One important caveat: post-hoc explanations are approximations, not a complete picture of everything the model did. They offer a meaningful window into the model's reasoning, not a full blueprint.

Difference between black box AI and explainable AI

Understanding what explainable AI in finance is and how it works is only half the picture. The more pressing question for finance leaders is: why does it matter right now, and what's actually at stake if your AI tools can't explain themselves?

The answer cuts across four dimensions: regulatory pressure, board accountability, model integrity, and organizational trust. Each one is reason enough on its own. Together, they make explainability one of the most consequential capabilities a finance team can evaluate in an AI tool today.

Regulators aren't waiting. The EU AI Act flags credit scoring, lending, and risk assessment AI as high-risk, and from August 2, 2026, explainability is a legal requirement, not a nice-to-have.

GDPR already gives individuals the right to ask why an automated system made a decision about them. In the U.S., the Equal Credit Opportunity Act requires lenders to give specific reasons for turning someone down. The consequences of getting this wrong are real: in 2019, Apple Card faced a regulatory investigation after customers reported that its algorithm offered women lower credit limits than men with similar financial profiles. The decisions couldn't be readily explained, and by the time anyone looked into them, the reputational damage was already done.

That external pressure doesn't stop at the regulator's door. It follows the numbers all the way into your boardroom. Here's the scenario most FP&A leaders dread: you're in a board meeting, the AI flagged an unusual variance, and someone asks, "How did it arrive at that?" If you can't answer, you lose the room, and your credibility takes the hit, not the model.

This isn't a hypothetical risk. Gartner found that 58% of finance functions were already using AI in 2024, and that 59% of CFOs reported using it in 2025. AI is now widespread in finance. The teams that will thrive are the ones who can explain what it's doing, not just report what it said.

But AI explainability isn't just about satisfying external scrutiny. It also makes your team smarter. A bad assumption buried in an AI model doesn't stay buried. It shows up in every forecast, every variance flag, and every anomaly alert, quietly, until it doesn't.

Gartner's research predicts that 60% of AI projects without AI-ready data will be abandoned through 2026, and MIT research shows that 95% of corporate AI initiatives fail. Explainability is your early warning system. When you can see why the model flagged something, you can catch a data problem at the source instead of presenting a flawed forecast to your CFO.

And when your team does understand the reasoning, something shifts. Here's a stat worth sitting with: according to Tipalti's 2025 AI in Finance Report, a survey of 500 finance professionals, 52% said they still need stronger AI governance frameworks, meaning most teams are operating with AI outputs they can't fully stand behind. That gap is where trust breaks down.

And leadership can sense that uncertainty. When people understand the reasoning behind an AI output, they're far more likely to act on it. Another research study showed that many companies are experimenting with AI, but without a clear plan for integrating it into finance operations. This often comes down to leaders not fully understanding what AI is or how it actually works.

This confidence gap only closes when AI explainability is part of the picture.

AI is being deployed across virtually every corner of finance, from credit decisions and fraud detection to quarterly forecasting and board reporting. But the explainability challenge looks different depending on where in the finance function you sit. The six applications below cover the full spectrum, from banking and lending contexts where regulatory pressure is most acute, to FP&A workflows where the question isn't compliance. It's whether your CFO can trust and act on what the AI is telling them.

Infographic showcasing six explainable AI in finance applications

What explainable AI in finance enables: Techniques like SHAP and LIME—tools that break down model reasoning—surface exactly which factors drove an approval or rejection: income level, credit history, debt-to-income ratio, and how heavily each was weighted. This makes AI credit decisions auditable, open to challenge, and legally defensible.

Who benefits: Lenders, compliance officers, credit risk teams, and loan applicants

What good looks like: A rejected applicant receives a specific, actionable explanation: "If your debt-to-income ratio were 5% lower, this application would have been approved." That's the power of counterfactual explanations, not just a verdict, but a path forward. Under the EU AI Act and the U.S. Equal Credit Opportunity Act, lenders must be able to explain adverse decisions. A black-box model can't meet that bar.

💡 Pro Tip: Counterfactual explanations are especially powerful in credit decisions. They give applicants something actionable, not just a rejection, and give compliance teams a defensible, auditable paper trail.
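To make the counterfactual idea concrete, here is a minimal sketch. It assumes a toy scoring rule whose weights and approval threshold are invented for illustration (`score`, `approve`, and `dti_counterfactual` are hypothetical names, not part of any real credit system). It searches for the smallest reduction in debt-to-income ratio that would flip a rejection into an approval.

```python
# Toy scoring rule for illustration only. The weights and the approval
# threshold are assumptions, not taken from any real lender or regulation.
APPROVAL_THRESHOLD = 50


def score(income_k: int, dti_pct: int, years_history: int) -> int:
    """Toy credit score: income in $K, debt-to-income in percentage points."""
    return income_k + 5 * years_history - 2 * dti_pct


def approve(income_k: int, dti_pct: int, years_history: int) -> bool:
    return score(income_k, dti_pct, years_history) >= APPROVAL_THRESHOLD


def dti_counterfactual(income_k: int, dti_pct: int, years_history: int):
    """Smallest DTI reduction (percentage points) that leads to approval.

    Returns 0 if the applicant is already approved and None if no DTI
    reduction alone is enough.
    """
    for reduction in range(0, dti_pct + 1):
        if approve(income_k, dti_pct - reduction, years_history):
            return reduction
    return None


applicant = {"income_k": 60, "dti_pct": 20, "years_history": 4}
needed = dti_counterfactual(**applicant)

if needed is None:
    print("Lowering the debt-to-income ratio alone would not change the decision.")
elif needed == 0:
    print("Application approved.")
else:
    print(f"If your debt-to-income ratio were {needed} points lower, "
          "this application would have been approved.")
```

A real model would have many more inputs and non-linear behavior, so the search would be over several features at once, but the output has the same shape: a specific change the applicant could act on.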

What explainable AI in finance enables: Explainable AI moves fraud detection beyond the alert, showing analysts why a transaction was flagged, which data patterns triggered the model, and how confident the system is in its classification. That context is what makes alerts actionable instead of overwhelming.

Who benefits: Compliance teams, anti-money laundering (AML) analysts, risk operations, and financial crime units

What good looks like: An analyst reviewing a flagged transaction sees: "Flagged due to unusual cross-border transfer pattern—3 transactions over 48 hours to a new payee, 340% above account average." They can validate or dismiss the alert in seconds instead of investigating blind.

💡 Pro Tip: Without explainable AI, fraud models can become noise machines, flagging so many transactions that analysts stop trusting them and start overriding alerts by default. Explainability is what allows teams to calibrate thresholds with confidence and actually reduce false positive volume over time.
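As a rough illustration of an alert that carries its own reason, the sketch below uses a simple hand-written rule rather than a trained model. The `Txn` class, the `explain_flag` function, and every threshold are assumptions made up for this example; a production fraud system would derive its reasons from the model itself.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Txn:
    when: datetime
    amount: float
    payee: str
    cross_border: bool


def explain_flag(txns, known_payees, account_avg,
                 window_hours=48, min_count=3, pct_threshold=200.0):
    """Return a plain-English reason if the rule fires, otherwise None.

    The rule (hypothetical): several cross-border transfers to previously
    unseen payees within the window, well above the account's average amount.
    """
    if not txns:
        return None
    window_start = max(t.when for t in txns) - timedelta(hours=window_hours)
    hits = [t for t in txns
            if t.when >= window_start
            and t.cross_border
            and t.payee not in known_payees]
    if len(hits) < min_count:
        return None
    avg_hit = sum(t.amount for t in hits) / len(hits)
    pct_above = (avg_hit - account_avg) / account_avg * 100
    if pct_above < pct_threshold:
        return None
    return (f"Flagged due to unusual cross-border transfer pattern: "
            f"{len(hits)} transactions over {window_hours} hours to payees "
            f"not seen before, {pct_above:.0f}% above account average.")


now = datetime(2026, 1, 10, 12, 0)
history = [
    Txn(now - timedelta(days=5), 450.0, "Landlord", False),
    Txn(now - timedelta(hours=40), 2200.0, "NewCo Ltd", True),
    Txn(now - timedelta(hours=20), 2200.0, "NewCo Ltd", True),
    Txn(now, 2200.0, "NewCo Ltd", True),
]
reason = explain_flag(history, known_payees={"Landlord"}, account_avg=500.0)
print(reason)
```

Because the reason names the count, the time window, and the size of the deviation, an analyst can check each element against the account instead of starting from a bare alert.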

What explainable AI in finance enables: Explainable AI surfaces which market signals, valuation factors, or correlations drove an AI-generated asset allocation recommendation or risk assessment, giving portfolio managers the ability to evaluate the logic before acting on it, rather than simply following the output.

Who benefits: Portfolio managers, risk officers, investment committees, and institutional compliance teams

What good looks like: Instead of "Reduce exposure to sector X," an explainable system shows: "Recommendation driven by rising correlation with credit spreads (42%), declining earnings momentum (35%), and elevated volatility relative to benchmark (23%)." The manager can agree, challenge, or override, with full visibility into the reasoning.

💡 Pro Tip: The CFA Institute's 2025 report on explainable AI in finance warns that in investment and portfolio management specifically, lack of explainability combined with model "hallucinations" can lead to "misinformed decisions and financial losses", even when the model's historical track record looks strong. Past performance doesn't make a black box safe.

What explainable AI in finance enables: Explainable AI in forecasting lets finance teams see exactly which drivers the model weighted—revenue trends, headcount changes, pipeline velocity, market conditions—and by how much. That visibility makes it possible to challenge assumptions, adjust inputs in real-time, and run scenario analyses that leadership can interrogate and defend.

Who benefits: FP&A managers, CFOs, financial controllers, and board members who rely on forecast outputs for capital allocation decisions

What good looks like: Instead of "Q3 forecast: $4.2 million," an explainable FP&A system shows: "Forecast driven by 60% historical seasonality, 25% pipeline velocity, 15% trailing headcount. Key risk: if pipeline conversion drops below 22%, forecast falls to $3.8 million." That's not just a number. It's an output your CFO can interrogate, adjust, and defend.

💡 Pro Tip: Explainable forecasts make what-if analysis genuinely useful. When you can see which drivers were weighted, you can change them and immediately see the impact. That's the difference between a forecast that informs decisions and one that just reports them.
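One way to picture a forecast with visible, adjustable drivers is a weighted blend of per-driver estimates. The sketch below is only that: the driver estimates, the `forecast` and `what_if` helpers, and the blending approach are assumptions for illustration, chosen so the weights match the 60/25/15 split described above. It is not how any particular planning tool computes its numbers.

```python
# Hypothetical figures: each driver's own revenue estimate in $K, and the
# weight (in percent) the blended forecast gives to that driver.
WEIGHTS_PCT = {"seasonality": 60, "pipeline": 25, "headcount": 15}
ESTIMATES_K = {"seasonality": 4250, "pipeline": 4080, "headcount": 4200}


def forecast(estimates_k, weights_pct):
    """Return (forecast in $K, per-driver contribution in $K)."""
    if sum(weights_pct.values()) != 100:
        raise ValueError("weights must sum to 100 percent")
    contributions = {
        driver: weights_pct[driver] * estimates_k[driver] / 100
        for driver in weights_pct
    }
    return sum(contributions.values()), contributions


def what_if(estimates_k, weights_pct, **overrides):
    """Re-run the forecast with some driver estimates replaced."""
    adjusted = {**estimates_k, **overrides}
    return forecast(adjusted, weights_pct)[0]


base, parts = forecast(ESTIMATES_K, WEIGHTS_PCT)
print(f"Base forecast: ${base:,.0f}K")
for driver, value in parts.items():
    print(f"  {driver}: ${value:,.0f}K ({WEIGHTS_PCT[driver]}% weight)")

# What if the pipeline-based estimate drops to $3,600K?
stressed = what_if(ESTIMATES_K, WEIGHTS_PCT, pipeline=3600)
print(f"With weaker pipeline: ${stressed:,.0f}K (change: ${stressed - base:,.0f}K)")
```

The point of the design is that every input the forecast depends on is a named, inspectable value, so a challenge from the CFO becomes a one-line change rather than a request to the vendor.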

What explainable AI in finance enables: When AI flags a budget variance, an explainable system doesn't just surface the number, it explains the specific driver behind it. Finance teams can communicate context to leadership instead of arriving at a meeting with an alert and no answer.

Who benefits: FP&A teams, financial controllers, CFOs, and audit committees who need to understand and present variance drivers, not just variance figures

What good looks like: AI flags that marketing spend is up 18% versus budget. An explainable AI system shows: "Variance driven by $120K Q3 conference spend not included in the original budget. This is not a recurring overspend pattern." That single sentence changes the entire board conversation.

💡 Pro Tip: Natural language variance explanations, where the AI generates the narrative alongside the numbers, are among the most practical and high-value explainable AI applications for FP&A today. If your planning tool flags everything but explains nothing, it's creating work, not reducing it.
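The sketch below shows one simple way a narrative could be attached to a variance: compare budget and actuals line by line, pick the largest driver, and phrase the result. The `explain_variance` function and all line items and amounts are hypothetical, and a template like this is far simpler than what an AI-generated narrative would involve.

```python
def explain_variance(category, budget, actual):
    """Build a one-sentence explanation of a budget variance.

    `budget` and `actual` map line-item names to amounts in dollars.
    """
    total_budget = sum(budget.values())
    if total_budget == 0:
        raise ValueError("total budget must be non-zero")
    total_delta = sum(actual.values()) - total_budget
    pct = total_delta / total_budget * 100
    direction = "up" if total_delta >= 0 else "down"

    # Per-line variance; lines missing on either side count as zero.
    lines = set(budget) | set(actual)
    deltas = {line: actual.get(line, 0) - budget.get(line, 0) for line in lines}
    driver = max(deltas, key=lambda line: abs(deltas[line]))
    sign = "+" if deltas[driver] >= 0 else "-"

    note = ""
    if budget.get(driver, 0) == 0:
        note = ", which was not included in the original budget"

    return (f"{category} spend is {direction} {abs(pct):.0f}% versus budget. "
            f"Largest driver: {driver} ({sign}${abs(deltas[driver]):,.0f}){note}.")


# Hypothetical Q3 marketing figures.
budget = {"paid media": 400_000, "content": 250_000}
actual = {"paid media": 398_000, "content": 249_000, "conference": 120_000}

summary = explain_variance("Marketing", budget, actual)
print(summary)
```

Even this crude version changes what the reader sees: the percentage arrives together with the line item that caused it and a hint about whether it was planned.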

What explainable AI in finance enables: Explainable AI provides a complete, traceable audit trail for every forecast, report, and variance analysis, showing data sources, model logic, and any manual adjustments made. Every number becomes traceable from ERP input to board-level output, without the finance team having to manually reconstruct the logic.

Who benefits: Finance teams, external auditors, internal audit functions, CFOs, and compliance officers in regulated industries

What good looks like: An auditor queries a revenue figure in the board report. The system immediately surfaces the data lineage: source system, transformation logic, model assumptions, and any overrides applied. The audit takes hours, not days, and the finance team spends the meeting on strategy, not defense.

💡 Pro Tip: Audit readiness and explainability aren't separate workstreams. They're the same capability viewed from two angles. If your AI reporting tool can't show complete data lineage from source to output, it's adding governance risk, not reducing it.
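A data lineage record can be as simple as a timestamped, append-only list of events keyed by the reported figure. The following sketch assumes hypothetical names throughout (`LineageEvent`, `AuditTrail`, the step labels, and the example events); a real system would persist this in a database and enforce immutability there.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class LineageEvent:
    figure_id: str   # the reported number this event relates to
    step: str        # "source", "transformation", "model_run", or "override"
    detail: str
    actor: str
    at: datetime


class AuditTrail:
    """In-memory log that only exposes adding and reading events."""

    def __init__(self) -> None:
        self._events: List[LineageEvent] = []

    def record(self, figure_id: str, step: str, detail: str, actor: str) -> None:
        event = LineageEvent(figure_id, step, detail, actor,
                             datetime.now(timezone.utc))
        self._events.append(event)

    def trace(self, figure_id: str) -> List[str]:
        """Return the history of one figure, in the order it was recorded."""
        return [f"{e.step}: {e.detail} (by {e.actor})"
                for e in self._events if e.figure_id == figure_id]


trail = AuditTrail()
trail.record("Q3_revenue", "source", "GL revenue accounts pulled from ERP", "etl_job")
trail.record("Q3_revenue", "transformation", "Intercompany sales eliminated", "etl_job")
trail.record("Q3_opex", "source", "GL expense accounts pulled from ERP", "etl_job")
trail.record("Q3_revenue", "model_run", "Forecast model run with current drivers", "forecast_service")
trail.record("Q3_revenue", "override", "Manual adjustment for delayed contract", "fpna_manager")

lineage = trail.trace("Q3_revenue")
for line in lineage:
    print(line)
```

When an auditor queries a figure, `trace` returns its full path from source to override in one call, which is the behavior the "hours, not days" scenario depends on.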

You don't need to understand the math behind these explainable AI techniques to use them well. But knowing what they do, and what their limits are, will help you ask better questions when evaluating AI-powered finance tools.

| Technique | What It Does in Plain English | Best For |
| --- | --- | --- |
| SHAP (SHapley Additive Explanations) | Assigns a contribution score to each input variable, showing how much each one (revenue trend, headcount, pipeline data) pushed the final output up or down | Understanding which drivers matter most across forecasts and model outputs |
| LIME (Local Interpretable Model-agnostic Explanations) | Builds a simpler model around a single prediction to approximate why the complex model reached that specific conclusion | Explaining individual decisions without needing to expose the full model |
| Counterfactual Explanations | Answers: "What would have had to change for the output to be different?" For example, "If pipeline conversion were 3% higher, the Q3 forecast would increase by $280K" | Scenario planning, credit decisions, and any situation requiring actionable what-if reasoning |
| Interpretable (Ante-hoc) Models | AI built on transparent algorithms like decision trees or linear regression; every step of the logic can be followed from input to output | High-risk compliance use cases where full auditability is non-negotiable |
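For readers who want to see what a "contribution score" means in practice, the sketch below computes exact Shapley values by brute force for a tiny, made-up forecast model. Everything here is an assumption for illustration: the `toy_forecast` function, its coefficients, and the baseline and current-quarter inputs. Enumerating every ordering is only feasible for a handful of inputs, which is why production libraries generally rely on approximations or model-specific algorithms instead.

```python
from itertools import permutations


def toy_forecast(x):
    """Made-up revenue model in $K, with an interaction between two inputs."""
    return (1000
            + 4 * x["pipeline"]
            + 20 * x["headcount"]
            + x["pipeline"] * x["seasonal_uplift"])


def shapley_values(model, instance, baseline):
    """Exact Shapley values: average each input's marginal effect over every
    order in which inputs can be switched from baseline to actual values."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orders = list(permutations(names))
    for order in orders:
        current = dict(baseline)
        previous = model(current)
        for name in order:
            current[name] = instance[name]
            updated = model(current)
            totals[name] += updated - previous
            previous = updated
    return {name: total / len(orders) for name, total in totals.items()}


baseline = {"pipeline": 500, "headcount": 40, "seasonal_uplift": 0}
this_quarter = {"pipeline": 600, "headcount": 45, "seasonal_uplift": 1}

phi = shapley_values(toy_forecast, this_quarter, baseline)
for name, value in phi.items():
    print(f"{name}: {value:+,.0f}K")

# The contributions add up to the gap between the two forecasts.
gap = toy_forecast(this_quarter) - toy_forecast(baseline)
print(f"Total explained: {sum(phi.values()):,.0f}K of {gap:,.0f}K")
```

The useful property is the last line: the scores sum exactly to the difference between the forecast being explained and the baseline, so nothing is left unaccounted for, and the interaction between pipeline and seasonality is split between the two inputs rather than hidden.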

There's an inherent tension at the heart of AI explainability: the more complex a model, the more accurate it tends to be, and the harder it is to explain. A deep learning model might predict cash flow with impressive precision, but offer little visibility into how it got there. A decision tree is fully transparent but may miss patterns a neural network would catch.

This isn't a problem to solve. It's a tradeoff to manage. The use case determines which side matters more:

The right question to ask your AI vendor isn't "how accurate is your model?" It's "how does your model explain itself, and to whom?"

Most AI-powered FP&A platforms will tell you their models are explainable. Fewer will show you what that actually means in practice. These four questions cut through the marketing language and get to what matters before you're in a board meeting wishing you'd asked them sooner.

The strongest signal of genuine explainability in an FP&A tool isn't a confidence score or a feature importance chart. It's whether the system can generate a plain-English explanation alongside the number. Not "variance: +18%," but "marketing spend is up 18% versus budget, driven by a Q3 conference cost not included in the original plan."

Ask your vendor directly: "If your AI flags a variance or revises a forecast, does it produce a written explanation of which drivers caused the change?" If the answer is a dashboard with color-coded bars and no narrative, that's a black box with better UX, not a genuinely explainable system.

A forecast your team can't interrogate is a forecast your team can't defend. Explainable forecasting means the model's inputs and weightings are visible, and changeable. Your team should be able to see that the Q3 revenue forecast weighted pipeline velocity at 25%, question that assumption, adjust it, and immediately see the impact on the output.

Tools that deliver a forecast number without exposing the underlying assumptions are black boxes, regardless of their accuracy track record. Accuracy you can't interrogate isn't an asset in a CFO presentation, it's a liability. When evaluating vendors, ask for a live demonstration of assumption adjustment, not just a feature checklist.

Explainability without documentation is incomplete, and in regulated industries, it's a governance risk. A best-in-class FP&A tool should maintain full data lineage from ERP source to final board report: every data transformation, model run, input change, and manual override logged, timestamped, and accessible for internal review or external audit.

This matters beyond compliance. When your auditors query a revenue figure or your CFO asks how last quarter's forecast was built, your team should be able to pull the full history in minutes, not reconstruct it from memory and spreadsheets. If a vendor can't clearly explain how their system logs model decisions and data transformations, treat that as a red flag.

True explainability isn't built for data scientists. It's built for CFOs, board members, and auditors who need to understand and act on AI outputs without a technical interpreter in the room. There's a meaningful difference between a system that explains itself in finance language and one that surfaces model-speak.

Finance language sounds like: "Forecast confidence is lower this quarter due to higher variance in pipeline conversion rates over the last 60 days." Model-speak sounds like: "Feature weight distribution shifted; SHAP values indicate high sensitivity to input variable 3."

Both may be technically accurate. Only one is useful to the people making decisions. When evaluating tools, ask your vendor to walk a non-technical stakeholder, for example, a CFO or controller, through an AI-generated explanation without a demo script. How that goes will tell you everything.

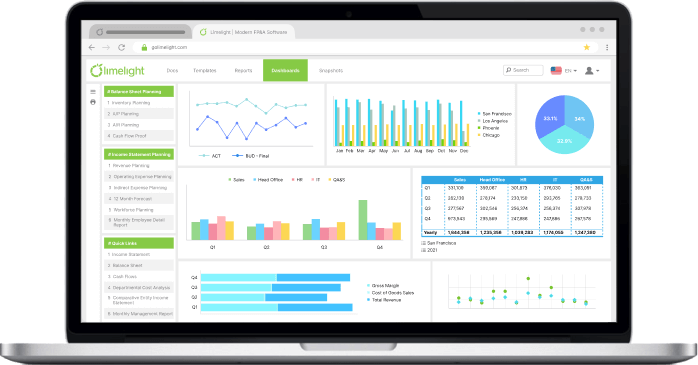

Most FP&A platforms have added AI. Fewer have built it with explainability at the center. Limelight is a cloud-based, Excel-free FP&A platform designed specifically for finance teams, and its AI features are built around a straightforward principle: every number should come with a story your CFO can actually use.

Here's how that maps to the four evaluation criteria:

The results speak for themselves. Triple Crown Sports uses Limelight to analyze KPIs across events and deliver board-ready insights in minutes. Communication Service for the Deaf cut its budget cycle in half after implementation.

Finance teams exploring explainable AI in FP&A can see how it works in practice with a demo.

Explainable AI (XAI) in finance refers to AI systems designed so that their outputs (forecasts, decisions, classifications) can be understood, interpreted, and audited by humans, not just produced. The contrast with traditional "black-box" AI is straightforward: a black-box model might generate a Q3 revenue forecast of $4.2M with no visibility into what drove it, while an explainable system shows exactly which inputs (pipeline velocity, seasonality, headcount) were weighted and by how much. In practice, explainable AI applies across credit scoring, variance analysis, fraud detection, and financial forecasting; basically, anywhere a finance team needs to explain not just what the AI said, but why.

Explainability matters for three interconnected reasons: regulatory compliance, stakeholder accountability, and error detection. Regulators are tightening requirements. The EU AI Act mandates explainability for high-risk AI systems including credit scoring, and GDPR already gives individuals the right to an explanation for automated decisions affecting them. Internally, finance teams that can't explain AI-generated forecasts or variance flags to boards and auditors face a credibility problem that no level of model accuracy can fix. And without visibility into model logic, errors in training data or flawed assumptions can compound quietly across every forecast and report until they surface at the worst possible moment.

The four most common XAI techniques in finance are SHAP, LIME, counterfactual explanations, and interpretable models. SHAP (SHapley Additive Explanations) assigns a contribution score to each input variable, showing how much each one moved the final output up or down; it is the most widely used technique in financial AI today. LIME (Local Interpretable Model-agnostic Explanations) builds a simpler model around a single prediction to approximate why a complex model reached that specific conclusion. Counterfactual explanations answer "what would have had to change for the output to be different?" (for example, "if pipeline conversion were 3% higher, the Q3 forecast would increase by $280K"), making them especially useful for scenario planning and credit decisions. Interpretable models like decision trees and logistic regression build transparency directly into the model architecture, making every step of the logic followable from input to output.

The EU AI Act classifies credit scoring, lending decisions, and risk assessment AI as high-risk systems, which means they are subject to mandatory explainability, transparency, and human oversight requirements. The ban on prohibited AI practices took effect in February 2025, and the full high-risk system obligations, including explainability requirements, apply from August 2, 2026. Importantly, the Act applies broadly: any financial institution whose AI outputs are used in EU contexts falls within scope, regardless of where the firm is headquartered. Finance teams using AI in credit, risk, or investment decision-making should be actively assessing compliance readiness now rather than waiting for the deadline.

The most reliable way to evaluate an FP&A tool's explainability is to apply four practical tests before committing. First, ask whether the AI generates natural language explanations alongside numbers. Second, check whether model assumptions are visible and adjustable in real time, so your team can interrogate and change inputs rather than just accept outputs. Third, verify that the tool maintains a complete audit trail from ERP data source to final report, with every transformation, model run, and adjustment logged and accessible. Finally, test whether a non-technical stakeholder, such as a CFO or board member, can understand the AI's explanations without a technical interpreter in the room. If the tool fails any of these four tests, its explainability claims deserve closer scrutiny.
